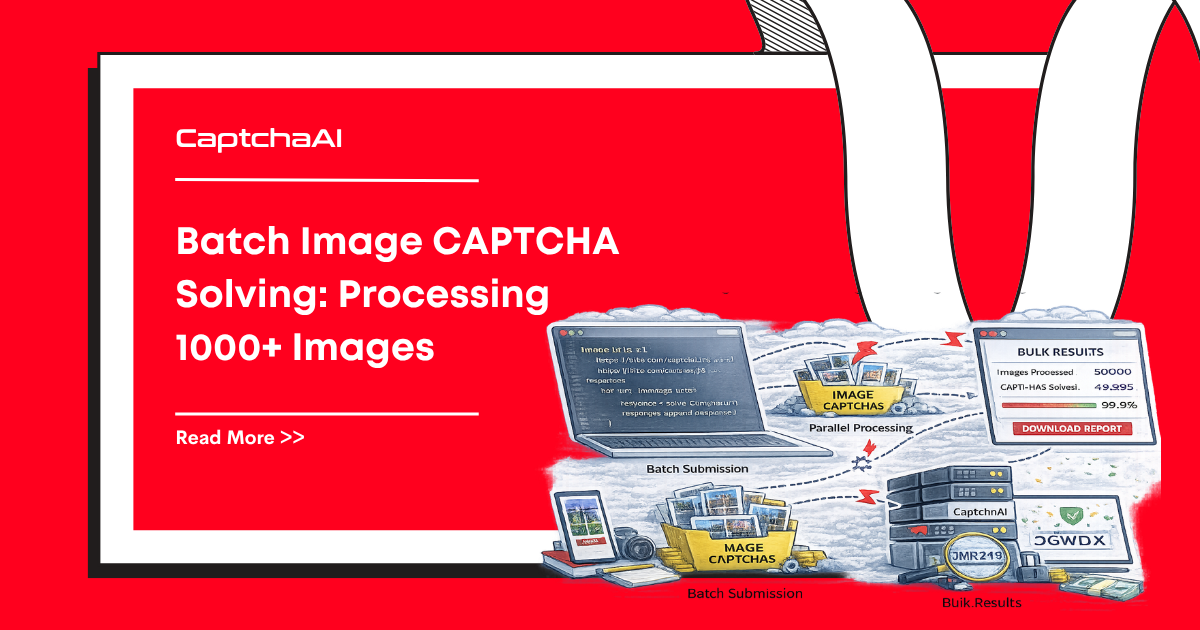

When you need to solve hundreds or thousands of image CAPTCHAs, sequential processing is too slow. This guide shows how to build a batch processing pipeline that submits, polls, and collects results for 1000+ images concurrently using CaptchaAI.

Architecture

```text
[Image Queue] → [Submit Workers] → [Poll Workers] → [Results Store]
      ↓                ↓                  ↓                 ↓
 1000 images     20 concurrent      Adaptive poll    CSV/JSON output
                    submits           intervals
```

Python: Async batch processor

```python
import asyncio
import base64
import csv
import time
from pathlib import Path

import aiohttp

API_KEY = "YOUR_API_KEY"
SUBMIT_URL = "https://ocr.captchaai.com/in.php"
RESULT_URL = "https://ocr.captchaai.com/res.php"

MAX_CONCURRENT_SUBMITS = 20
MAX_CONCURRENT_POLLS = 30
POLL_INTERVAL = 5        # seconds between polls
MAX_POLL_ATTEMPTS = 24   # 24 x 5 s = 2 minutes per task


async def submit_image(session, sem, image_path):
    """Submit a single image CAPTCHA."""
    async with sem:
        with open(image_path, "rb") as f:
            img_b64 = base64.b64encode(f.read()).decode()

        data = {
            "key": API_KEY,
            "method": "base64",
            "body": img_b64,
            "json": "1",
        }
        async with session.post(SUBMIT_URL, data=data) as resp:
            # content_type=None parses the body as JSON whatever Content-Type is sent
            result = await resp.json(content_type=None)

        if result["status"] != 1:
            return {"file": str(image_path), "error": result["request"]}

        return {
            "file": str(image_path),
            "task_id": result["request"],
            "submitted_at": time.time(),
        }


async def poll_result(session, sem, task):
    """Poll for a single task result."""
    async with sem:
        for attempt in range(MAX_POLL_ATTEMPTS):
            await asyncio.sleep(POLL_INTERVAL)

            params = {
                "key": API_KEY,
                "action": "get",
                "id": task["task_id"],
                "json": "1",
            }
            async with session.get(RESULT_URL, params=params) as resp:
                result = await resp.json(content_type=None)

            if result["status"] == 1:
                return {
                    "file": task["file"],
                    "task_id": task["task_id"],
                    "answer": result["request"],
                    "solve_time": time.time() - task["submitted_at"],
                }

            # Anything other than "not ready" is a permanent error for this task
            if result["request"] != "CAPCHA_NOT_READY":
                return {
                    "file": task["file"],
                    "task_id": task["task_id"],
                    "error": result["request"],
                }

        return {
            "file": task["file"],
            "task_id": task["task_id"],
            "error": "TIMEOUT",
        }


async def process_batch(image_dir, output_file="results.csv"):
    """Process all images in a directory."""
    image_paths = sorted(Path(image_dir).glob("*.png")) + \
        sorted(Path(image_dir).glob("*.jpg"))
    print(f"Found {len(image_paths)} images")

    submit_sem = asyncio.Semaphore(MAX_CONCURRENT_SUBMITS)
    poll_sem = asyncio.Semaphore(MAX_CONCURRENT_POLLS)

    async with aiohttp.ClientSession() as session:
        # Phase 1: Submit all images
        print("Submitting...")
        submit_tasks = [
            submit_image(session, submit_sem, path)
            for path in image_paths
        ]
        submissions = await asyncio.gather(*submit_tasks)

        # Separate successes and errors
        pending = [s for s in submissions if "task_id" in s]
        errors = [s for s in submissions if "error" in s]
        print(f"Submitted: {len(pending)}, Errors: {len(errors)}")

        # Phase 2: Poll all pending tasks
        print("Polling for results...")
        poll_tasks = [
            poll_result(session, poll_sem, task)
            for task in pending
        ]
        results = await asyncio.gather(*poll_tasks)

    # Combine results
    all_results = results + errors

    # Write to CSV
    with open(output_file, "w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=[
            "file", "task_id", "answer", "solve_time", "error"
        ])
        writer.writeheader()
        for r in all_results:
            writer.writerow({
                "file": r.get("file", ""),
                "task_id": r.get("task_id", ""),
                "answer": r.get("answer", ""),
                "solve_time": round(r.get("solve_time", 0), 2),
                "error": r.get("error", ""),
            })

    solved = sum(1 for r in results if "answer" in r)
    failed = sum(1 for r in results if "error" in r)
    print(f"Done: {solved} solved, {failed} failed, {len(errors)} submit errors")
    print(f"Results saved to {output_file}")


# Run
if __name__ == "__main__":
    asyncio.run(process_batch("./captcha_images"))
```

Expected output:

```text
Found 1000 images
Submitting...
Submitted: 997, Errors: 3
Polling for results...
Done: 985 solved, 12 failed, 3 submit errors
Results saved to results.csv
```
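The architecture diagram mentions adaptive poll intervals, while the processor above waits a fixed `POLL_INTERVAL` between checks. One possible way to make polling adaptive is a delay schedule that starts short and backs off. The sketch below is only an illustration: `poll_delays` and all of its defaults (`initial`, `factor`, `cap`, `budget`) are hypothetical names and values, not CaptchaAI recommendations.

```python
def poll_delays(initial=2.0, factor=1.5, cap=10.0, budget=120.0):
    """Yield sleep durations that grow geometrically until they sum to budget.

    All four parameters are illustrative assumptions; tune them for your workload.
    """
    elapsed = 0.0
    delay = initial
    while elapsed < budget:
        # Never exceed the per-poll cap or overshoot the total time budget
        step = min(delay, cap, budget - elapsed)
        yield step
        elapsed += step
        delay *= factor


# Inspect the schedule: short waits first, then a steady cap
schedule = list(poll_delays())
print(schedule[:5], "...", f"{len(schedule)} polls in {sum(schedule):.0f}s")
```

To try it in `poll_result`, the fixed loop could become `for delay in poll_delays(): await asyncio.sleep(delay)`, with the rest of the loop body unchanged. Quick solves are picked up sooner, and slow ones cost fewer requests.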

Node.js: Worker pool batch processor

```javascript
const axios = require('axios');
const fs = require('fs');
const path = require('path');
const { createObjectCsvWriter } = require('csv-writer');

const API_KEY = 'YOUR_API_KEY';
const SUBMIT_URL = 'https://ocr.captchaai.com/in.php';
const RESULT_URL = 'https://ocr.captchaai.com/res.php';
const MAX_CONCURRENT = 20;
const POLL_INTERVAL_MS = 5000;

class BatchProcessor {
  constructor(concurrency = MAX_CONCURRENT) {
    this.concurrency = concurrency;
    this.results = [];
    this.processed = 0;
    this.total = 0;
  }

  async submitImage(imagePath) {
    const imgBase64 = fs.readFileSync(imagePath, { encoding: 'base64' });

    // Send the image as a form-encoded body: base64 data is too large
    // to pass reliably in a query string.
    const form = new URLSearchParams({
      key: API_KEY,
      method: 'base64',
      body: imgBase64,
      json: '1',
    });
    const resp = await axios.post(SUBMIT_URL, form);

    if (resp.data.status !== 1) {
      throw new Error(resp.data.request);
    }
    return resp.data.request;
  }

  async pollResult(taskId) {
    // 24 attempts x 5 s = 2 minutes per task
    for (let i = 0; i < 24; i++) {
      await new Promise(r => setTimeout(r, POLL_INTERVAL_MS));

      const resp = await axios.get(RESULT_URL, {
        params: { key: API_KEY, action: 'get', id: taskId, json: 1 },
      });

      if (resp.data.status === 1) return resp.data.request;
      if (resp.data.request !== 'CAPCHA_NOT_READY') {
        throw new Error(resp.data.request);
      }
    }
    throw new Error('TIMEOUT');
  }

  async processOne(imagePath) {
    const startTime = Date.now();
    try {
      const taskId = await this.submitImage(imagePath);
      const answer = await this.pollResult(taskId);
      this.processed++;

      const elapsed = ((Date.now() - startTime) / 1000).toFixed(1);
      console.log(`[${this.processed}/${this.total}] ${path.basename(imagePath)}: ${answer} (${elapsed}s)`);

      return { file: imagePath, answer, solveTime: elapsed, error: '' };
    } catch (err) {
      this.processed++;
      return { file: imagePath, answer: '', solveTime: 0, error: err.message };
    }
  }

  async run(imageDir, outputFile = 'results.csv') {
    const files = fs.readdirSync(imageDir)
      .filter(f => /\.(png|jpg|jpeg|gif)$/i.test(f))
      .map(f => path.join(imageDir, f));

    this.total = files.length;
    console.log(`Processing ${this.total} images with ${this.concurrency} workers`);

    // Process in chunks: each chunk waits for its slowest image before the next starts
    for (let i = 0; i < files.length; i += this.concurrency) {
      const chunk = files.slice(i, i + this.concurrency);
      const chunkResults = await Promise.all(
        chunk.map(f => this.processOne(f))
      );
      this.results.push(...chunkResults);
    }

    // Write CSV
    const csvWriter = createObjectCsvWriter({
      path: outputFile,
      header: [
        { id: 'file', title: 'File' },
        { id: 'answer', title: 'Answer' },
        { id: 'solveTime', title: 'Solve Time (s)' },
        { id: 'error', title: 'Error' },
      ],
    });
    await csvWriter.writeRecords(this.results);

    const solved = this.results.filter(r => r.answer).length;
    console.log(`Done: ${solved}/${this.total} solved. Results: ${outputFile}`);
  }
}

const processor = new BatchProcessor(MAX_CONCURRENT);
processor.run('./captcha_images').catch(err => {
  // Surface failures outside processOne, such as an unreadable directory
  console.error(err);
  process.exit(1);
});
```

Rate-aware batching

Avoid 429 errors by tracking your submission rate:

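```python
import asyncio
import time


class RateLimiter:
    """Sliding-window limiter: at most max_per_second acquisitions per second."""

    def __init__(self, max_per_second=10):
        self.max_per_second = max_per_second
        self.timestamps = []
        # Serializes callers so concurrent tasks cannot all pass the check
        # at once and burst past the limit (assumes Python 3.10+, where the
        # lock binds to the event loop on first use)
        self._lock = asyncio.Lock()

    async def acquire(self):
        async with self._lock:
            now = time.time()
            # Keep only submissions from the last second
            self.timestamps = [t for t in self.timestamps if now - t < 1.0]

            if len(self.timestamps) >= self.max_per_second:
                # Wait until the oldest submission leaves the window
                wait = 1.0 - (now - self.timestamps[0])
                if wait > 0:
                    await asyncio.sleep(wait)

            self.timestamps.append(time.time())


# Use in submit loop
rate_limiter = RateLimiter(max_per_second=10)


async def submit_with_rate_limit(session, image_path):
    await rate_limiter.acquire()
    # ... submit as before
```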

Progress tracking

```python
import sys
import time


class ProgressTracker:
    def __init__(self, total):
        self.total = total
        self.completed = 0
        self.solved = 0
        self.failed = 0
        self.start_time = time.time()

    def update(self, success=True):
        self.completed += 1
        if success:
            self.solved += 1
        else:
            self.failed += 1

        elapsed = time.time() - self.start_time
        rate = self.completed / elapsed if elapsed > 0 else 0
        eta = (self.total - self.completed) / rate if rate > 0 else 0

        # \r rewrites the same terminal line instead of printing a new one
        sys.stdout.write(
            f"\r[{self.completed}/{self.total}] "
            f"Solved: {self.solved} | Failed: {self.failed} | "
            f"Rate: {rate:.1f}/s | ETA: {eta:.0f}s"
        )
        sys.stdout.flush()
```
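The tracker only helps if `update()` is called as each task finishes. `asyncio.gather` returns everything at the end, so one option is `asyncio.as_completed`, which yields results in completion order. The sketch below is a self-contained illustration under assumptions: `fake_solve` is a hypothetical placeholder for the real submit-and-poll step, and `MiniTracker` is a stripped-down stand-in that exposes the same `update(success)` interface as `ProgressTracker`.

```python
import asyncio


class MiniTracker:
    """Stand-in with the same update(success) interface as ProgressTracker."""

    def __init__(self, total):
        self.total = total
        self.solved = 0
        self.failed = 0

    def update(self, success=True):
        if success:
            self.solved += 1
        else:
            self.failed += 1


async def fake_solve(i):
    """Hypothetical placeholder for submit + poll; every fourth task fails."""
    await asyncio.sleep(0.01 * (i % 3))
    if i % 4 == 0:
        return {"file": f"img{i}.png", "error": "TIMEOUT"}
    return {"file": f"img{i}.png", "answer": "abc"}


async def run_with_progress(n, tracker):
    results = []
    # as_completed yields awaitables in completion order,
    # so the tracker is updated as soon as each task finishes
    for fut in asyncio.as_completed([fake_solve(i) for i in range(n)]):
        result = await fut
        tracker.update(success="answer" in result)
        results.append(result)
    return results


tracker = MiniTracker(total=8)
results = asyncio.run(run_with_progress(8, tracker))
print(f"solved={tracker.solved} failed={tracker.failed}")
```

In the real pipeline, the same loop shape would wrap the `poll_result` coroutines, with a `ProgressTracker` in place of the stand-in.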

Troubleshooting

| Problem | Cause | Fix |
|---|---|---|
| 429 responses | Too many concurrent requests | Reduce MAX_CONCURRENT_SUBMITS, add rate limiter |
| Many timeouts | Polling too short or images too complex | Increase poll attempts or poll interval |
| ERROR_ZERO_BALANCE mid-batch | Balance ran out | Check balance before starting; estimate cost |
| High error rate | Corrupt or oversized images | Validate images before submission |
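For the last row, a cheap local check can filter out files that are empty, too large, or not images before they cost an API call. The following is a minimal sketch, not an official validator: `validate_image` is a hypothetical helper, and the 100 KB `MAX_BYTES` limit is an assumption that should be replaced with the limit documented by CaptchaAI.

```python
from pathlib import Path

# Magic-byte prefixes for the formats the batch scripts pick up
SIGNATURES = {
    "png": b"\x89PNG\r\n\x1a\n",
    "jpeg": b"\xff\xd8\xff",
    "gif": b"GIF8",
}
MAX_BYTES = 100 * 1024  # assumed limit; check the CaptchaAI docs for the real one


def validate_image(path, max_bytes=MAX_BYTES):
    """Return None if the file looks submittable, else a short reason string."""
    p = Path(path)
    if not p.is_file():
        return "missing"
    size = p.stat().st_size
    if size == 0:
        return "empty"
    if size > max_bytes:
        return "too large"
    # Compare the first bytes with known signatures instead of trusting the extension
    with p.open("rb") as f:
        head = f.read(8)
    if not any(head.startswith(sig) for sig in SIGNATURES.values()):
        return "unknown format"
    return None
```

A batch run could call this on every path first and write the rejected files straight to the results with the returned reason in the `error` column.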

FAQ

How many images can I submit concurrently?

20-30 concurrent submissions work well. Above that, you risk hitting rate limits. Use a semaphore to cap concurrency.
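To see what the semaphore cap does in isolation, the sketch below launches 100 dummy jobs behind a limit of 20 and records the largest number running at once. It is an illustration only: `measure_peak` is a hypothetical helper, and the short sleep stands in for the HTTP request.

```python
import asyncio


async def measure_peak(n_tasks, limit):
    """Run n_tasks dummy jobs behind a semaphore; return the peak number in flight."""
    sem = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def job():
        nonlocal in_flight, peak
        async with sem:  # at most `limit` jobs get past this line at a time
            in_flight += 1
            peak = max(peak, in_flight)
            await asyncio.sleep(0.01)  # stands in for the HTTP request
            in_flight -= 1

    await asyncio.gather(*(job() for _ in range(n_tasks)))
    return peak


peak = asyncio.run(measure_peak(n_tasks=100, limit=20))
print(f"peak concurrency: {peak}")
```

All 100 coroutines are created up front, but the peak never exceeds the limit. That is why the batch processor can hand every image to `asyncio.gather` at once.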

How much do 1000 images cost?

Check your current rate at captchaai.com. Image/OCR CAPTCHAs are among the cheapest solve types.

Process thousands of CAPTCHAs with CaptchaAI

Get your API key at captchaai.com.
